The Musketeer motto, “All for one, and one for all!” was a reminder that people are stronger when they work together. Musketering works for problems as well; bringing them together makes them stronger and harder to resolve. This is a case study where three unrelated problems: Data Latency, Data Quality, and UX were used to justify a full rewrite.

The All For One mentality, resulted in a team working hard and delivering no customer value for 12 months. The team was only able to deliver once they pivoted from Musketering and began attacking the problems separately.

The Problem: Slow, Ugly, and Inconsistent Reports

Our reporting pages had three problems:

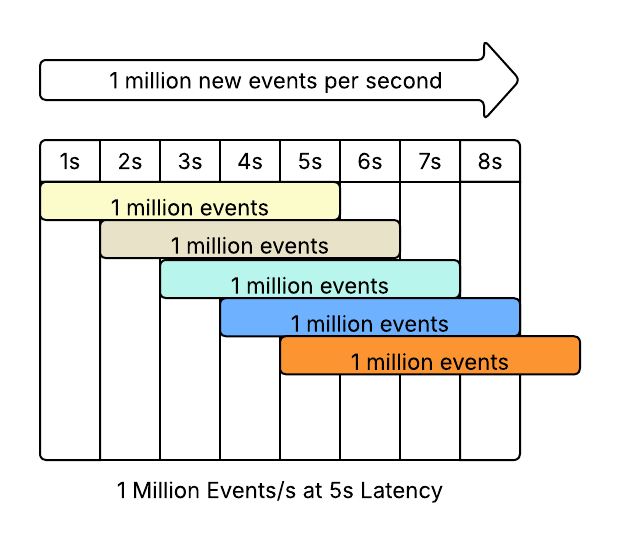

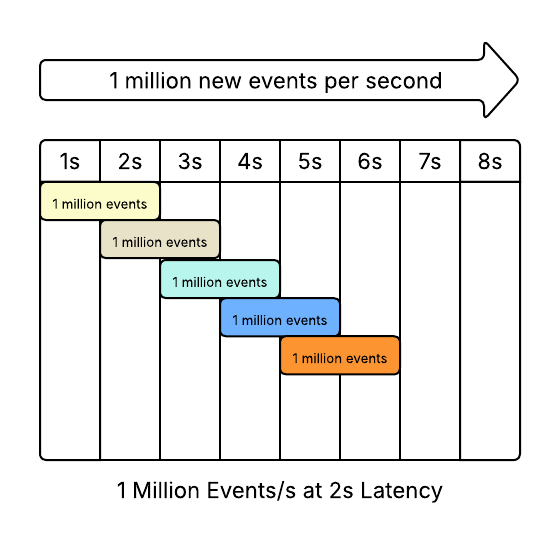

- Data Latency - The data loaded extremely slowly. Our biggest customers could wait up to 5 minutes for a report to load.

- Data Quality - The data was inconsistent with itself. The campaign report might claim that 1,000 people opened an email while a list of everyone who opened the email would only have 995 people.

- UX - The reports were extremely outdated. The UX hadn’t been refreshed in 8 years and the front end code was written in Ember, a dead frontend language. The company’s overall frontend had been iteratively migrating to React for years, reports was one of the last remaining pieces.

Addressing each issue would be a major undertaking, the team decided to tackle all 3 at once.

Insight: The three issues have very little to do with each other. The data being slow was unrelated to the data being inconsistent. The outdated UX had nothing to do with the available data.

Project Plan: New Data Stream, New Data Store, New UI - In 6 Weeks

The plan called for:

- All events to be published to kafka

- All data to be stored in Snowflake

- A new backend service to handle requests written in Java

- New Reports in React

At a high level the technical choices appeared reasonable. None of the technology was new to the company. Kafka was already in use and the new events wouldn’t add a significant amount of events onto the kafka stream. There were several backend Java services. More than 75% of the Ember pages had already been migrated to React. Snowflake was available to some customers as an add-on feature.

The choices became unreasonable when all of the moving pieces were to be completed in 6 weeks.

All For One - This Is Blocked By That

The UI couldn’t proceed until the Java service was set up. The java service couldn’t be built until there was data in Snowflake. Data couldn’t get in Snowflake until the events were published to Kafka.

There was a natural order to the work; there was also a 6 week timeline.

Could the UI begin development using dummy data? Absolutely!

Would the UI require tons of rework when it came time to integrate with the Java service? Absolutely!

The tight coupling would rattle timelines up and down the stack as team members working in isolation made new discoveries. Each group was working on the entire scope of the project - all of the UI, all of the java service, all of the Snowflake data. The project was set up to deliver everything, or nothing - All For One, One Release For All!

One For All! After Nine Months, One Release

Nine months into the six week project, the reports were ready to be released to customers.

Data events were published to Kafka! The Kafka stream was consumed by Snowflake! The UI was in React! There was a Java service acting as a middle layer between Snowflake and the React frontend.

Customers in the beta group were generally happy with the new reporting experience. All was well, until the AWS bill came in and was 20x more than expected.

Tight Coupling And Tight Deadlines Left No Room For Changes

It turned out that Snowflake was the wrong tool for the job. This is not Snowflake’s fault, any more than a hammer is to blame for hammering a screw.

The report needed unique ids in time series data, which isn’t something Snowflake is designed to support. To get sets the developers added multiple array columns and did in memory operations to ensure that all of the array entries were unique.

This was extremely computationally expensive.

Because the project plan was supposed to deliver in 6 weeks the developers had to use an existing data store. Because the project was late, there was no room to rethink the decision to use Snowflake.

Divide And Conquer

After 9 months of development, the new report had been released, and rolled back. With Snowflake prohibitively expensive, the team needed a new plan.

Remember, the plan had called for:

- All events to be published to kafka

- All data to be stored in Snowflake

- A new backend service to handle requests written in Java

- New Reports in React

Without Snowflake there was no need to publish data to kafka, or a new Java service.

The team switched from Musketering to Divide And Conquer.

First, the team built new endpoints on the original service, using the original database. It was slow, because the original system was slow. It had inconsistencies, because the original system had inconsistencies. The beautiful new UX was still beautiful.

Second, the team made the reports efficient and fast by adding rollup tables to the original database. This made the report fast as well as beautiful.

Finally, the team worked on the data inconsistencies. The inconsistencies were caused by race conditions and other concurrency issues. There was a source of truth, and the team decided to mitigate the issue by periodically synching against the source of truth.

From start to finish the pivot took 3 months. The final version was fast, consistent, and beautiful. The rollup tables and periodic sync increased database load by a small amount, well within the budget.

Musketering Delays Delivery

Bringing multiple problems together helps make the case for a rewrite more compelling. It also creates unnecessary coupling within a project. Changes create huge delays which discourages revising the plan.

In this case almost everything written for the new reporting experience was thrown away. Snowflake, because it was too expensive. The Java Service and Kafka events because they existed to feed Snowflake. Only the decision to rewrite 8 year old Ember reports in React turned out to be correct.

The opposite of Musketering is Divide and Conquer. By taking on one problem at a time the team was able to succeed in ⅓ of the time, with a much simpler and less expensive solution.